ION Remote

- Thread starter: Jonverse

Anvilx

Active Member

WiFi can be finicky and I'm not sure I'd want to rely on it for a focus session.

Yeah but with the money you saved I think you could afford to blanket the space with wifi.

It's not the signal strength so much as the connection itself. A WiFi connection can drop very easily, which is the primary reason ETC did not go this route with Eos/Ion. They once had a WiFi system that used HP iPAQ handhelds to connect to Obsession II or Emphasis systems. Besides the poor battery life of the handhelds, the connections were always problematic, so they went back to proprietary radio hardware.

But I have to wonder WHY the WiFi systems would get flaky, as I sit here typing this on my laptop on my front porch. I do occasionally get a dropped WiFi connection here when the phone rings, and that's a 5.8 GHz phone. Go figure. Perhaps Kirk at ETC could enlighten us as to why not WiFi.

SB

... But I have to wonder WHY the WiFi systems would get flaky, as I sit here typing this on my laptop on my front porch. I do occasionally get a dropped WiFi connection here when the phone rings, and that's a 5.8 GHz phone. Go figure. Perhaps Kirk at ETC could enlighten us as to why not WiFi. ...

Why not WiFi? There are a couple of reasons.

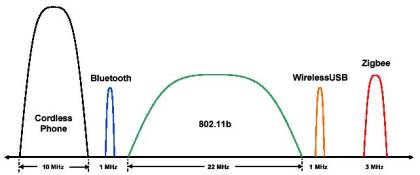

First, as you have experienced when your phone rings, the connection can be interrupted by other devices (or, phrased better for your scenario, the signal can be overpowered), because WiFi relies on a wider range of frequencies. That wider spectrum makes it easier for other devices to disrupt part of the bandwidth needed. WiFi falls in the 2.4 GHz range for the most widely used standards (802.11b/g/n) and in the 5.8 GHz range for the other commercially available routers (802.11a/n). (Note that the 802.11n standard allows a choice of 2.4 GHz, 5.8 GHz, or both, depending on the hardware.)

What other devices also operate in the 2.4 GHz band? Some quick Google searching reveals that all of these do:

Cordless Phones

Bluetooth Devices

Wireless USB

Zigbee Devices

Car Alarms

Microwave ovens also tend to cause a lot of electromagnetic interference in the 2.4 GHz band.

A pretty good article on avoiding interference in this range can be found at: Avoiding Interference in the 2.4-GHz ISM Band. While it is more focused on the development side (it is an electrical engineer's magazine) it does have a few useful graphics that explain some of the reasons interference happens.

But back to topic...

The second and more important reason that we do not use WiFi, especially for show-critical applications, is that the packet stream we rely on leaves no time to recover from dropped packets.

Think about how you use your computer to access the web. Generally you open your favorite browser, enter a URL, and wait for that website to load. If you have a blazing fast connection, it loads pretty quickly. If you aren't so lucky, you may see parts of the page load while other parts wait on images or other sections. Under the hood, the computer is downloading everything needed to display that page and saving it to a temporary location. If it misses something, it can simply ask for that packet again and it will get it. Once the page has loaded, you aren't actively using the network connection to stream that page to you; it is stored on the computer. That process can take a few seconds, but then you can scroll through the page because nothing on it is still trying to load.

In lighting, we are constantly changing that information. Levels change, parameters change, settings change, outputs change, etc. Instead of the "page" being loaded once and stored on the computer, it is constantly refreshing as that data changes. There is not enough time to ask the console to resend the information because, by that time, the entire page has already changed. So, if you miss information, you can fall out of sync and not have the correct display. This is why, if you run an application such as the Eos/Ion Client wirelessly, you may see it synchronize the show file several times: it doesn't know what it missed, so it has to assume it needs everything.
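To make the "it has to assume it needs everything" point concrete, here is a minimal Python sketch. It is not how the Eos/Ion Client is actually implemented; the sequence-number check, the `run_remote` function, and the sample updates are all assumptions made up for illustration. It shows a remote that takes a full snapshot whenever it notices a gap in the update stream, compared with one that ignores gaps and quietly drifts out of sync.

```python
def run_remote(updates, dropped, resync_on_gap=True):
    """Replay console updates to a remote, losing the sequence numbers in `dropped`.

    Hypothetical model: each update is (channel, level) and carries an implicit
    sequence number (its index). Returns (console_state, remote_state, full_syncs).
    """
    console, remote = {}, {}
    expected = 0        # next sequence number the remote expects to see
    full_syncs = 0
    for seq, (channel, level) in enumerate(updates):
        console[channel] = level          # the console moves on regardless
        if seq in dropped:
            continue                      # this update never reaches the remote
        if seq != expected and resync_on_gap:
            # Gap detected: the remote cannot know what it missed,
            # so it pulls the whole current state again.
            remote = dict(console)
            full_syncs += 1
        else:
            remote[channel] = level
        expected = seq + 1
    return console, remote, full_syncs


updates = [(1, 50), (2, 75), (1, 0), (3, 100), (2, 20), (1, 80)]

console, remote, full_syncs = run_remote(updates, dropped={2, 3})
print(remote == console, full_syncs)      # True 1

_, stale, _ = run_remote(updates, dropped={2, 3}, resync_on_gap=False)
print(stale == console)                   # False: channel 3 never arrived
```

On a link that drops packets often, that full snapshot happens again and again, which matches the repeated show file synchronization described above.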

So, in order to avoid those issues, the Net3 RFR uses a radio and receiver based on the Zigbee protocol. But Kirk, isn't that in the 2.4 GHz band you were describing earlier? Yes, it is. But because the Zigbee radio has a narrower bandwidth per channel than WiFi, it is less prone to interference.

(See this image from the article linked above: )
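For a rough feel of the narrower-channel argument, the sketch below works out which Zigbee channels sit clear of a given set of WiFi channels. It assumes the published channel plans (IEEE 802.15.4 channels 11 to 26, 2 MHz wide on 5 MHz spacing; 802.11b channels 1 to 13, roughly 22 MHz wide) and says nothing about which channels the Net3 RFR actually uses. The function names are made up for this example.

```python
def wifi_span(channel, width_mhz=22.0):
    """Approximate (low, high) edges in MHz of a 2.4 GHz WiFi channel (1-13)."""
    center = 2412 + 5 * (channel - 1)
    return center - width_mhz / 2, center + width_mhz / 2


def zigbee_span(channel, width_mhz=2.0):
    """(low, high) edges in MHz of an IEEE 802.15.4 channel (11-26)."""
    center = 2405 + 5 * (channel - 11)
    return center - width_mhz / 2, center + width_mhz / 2


def overlaps(a, b):
    """True if two (low, high) spans share any spectrum (touching edges do not count)."""
    return a[0] < b[1] and b[0] < a[1]


def clear_zigbee_channels(wifi_channels):
    """Zigbee channels that do not overlap any of the given WiFi channels."""
    busy = [wifi_span(c) for c in wifi_channels]
    return [z for z in range(11, 27)
            if not any(overlaps(zigbee_span(z), w) for w in busy)]


# The common non-overlapping WiFi layout uses channels 1, 6 and 11.
print(clear_zigbee_channels([1, 6, 11]))   # [15, 20, 25, 26]
```

Because each Zigbee channel is so narrow, a few of them fit in the gaps between busy WiFi channels, whereas a 22 MHz WiFi channel has nowhere to hide.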

We also employ frequency hopping technology, so while the main signal is centered on one frequency, it does hop around the entire 2.4 band in millisecond bursts to further avoid interference.
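The general idea of hopping can be sketched in a few lines of Python. This is a generic illustration only: the thread does not describe the RFR's real hopping scheme, so the shared seed, the channel list, and the fixed interferer below are all assumptions. Both ends derive the same pseudo-random channel sequence, and an interferer parked on a few channels only catches some of the bursts.

```python
import random


def hop_sequence(shared_seed, channels, hops):
    """Pseudo-random channel sequence that both ends can reproduce from one seed."""
    rng = random.Random(shared_seed)
    return [rng.choice(channels) for _ in range(hops)]


channels = list(range(11, 27))        # assumed channel numbering, 16 channels
tx = hop_sequence(1234, channels, 1600)
rx = hop_sequence(1234, channels, 1600)
print(tx == rx)                       # True: same seed, same hop pattern

# A hypothetical interferer sitting on four of the sixteen channels.
jammed = {16, 17, 18, 19}
hits = sum(1 for ch in tx if ch in jammed)
print(f"{hits} of {len(tx)} bursts landed on a jammed channel")
```

A radio that stayed on one of the jammed channels would lose every burst; a hopping radio loses only the fraction that happens to land there.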

The iRFR app does rely on WiFi technology, and is thus more prone to interference and disconnects than the dedicated Net3 RFR.

Thus, as Kirk has shown, an iPad/iTouch/iPhone on WiFi is not going to be as reliable as the Radio Focus Remote. For professional applications, where I would otherwise have to keep explaining to the designer that "the WiFi is flaky today" is why the focus call is getting bogged down, the $1800 suddenly starts paying for itself by not wasting labor.

SB

I just read elsewhere that Digicom wireless headsets are known to interfere with RRFU, and are not very good anyway. Can anyone confirm either?

Does the RRFU use the same frequencies as the RFR?

EDIT:

RRFU transmits on UHF 914.5MHz, 1mW maximum;

Digicom: 902 - 928 MHz with over 256,000 codes.

So I can see where there could be issues. I was never happy with any 900 MHz cordless phone.

Kirk, I am new to the ION and the RFR, and am looking for some detailed info as to what the RFR can do. Where can I get this info?

I appreciate the help.

Jeremy

Auditorium Manager WBHS

The RFR gives you pretty good remote console functionality, including, but not limited to:

- Channel access and call up, including one button (or scroll wheel) channel check, channel range, etc...

- Address (DMX address/Dimmer) check, also one button, as well as multiple address call up

- Rem Dim (since it's a command-line desk, you can pre-set commands for focus, e.g. "Ch 128, Full, RemDim", then wait to press Enter. Thus you have the next lamp/unit already set up for the "Next" request. This is amazingly faster than on the old Express/ion Remote!)

- Patch

- Macro call up

- Cue operation functions: Go, Go To Cue, Stop/Back, Record, Trace, Time

- Sub and Group access

- Fixture/Device Home function

Additional practical info on use: it's a radio, so you can roam within 300 ft or so of the base station. It uses rechargeable NiMH batteries (you can get replacements at Radio Shack) and comes with a USB/wall-wart charger, a pretty standard and easy-to-find item. Battery life is a couple of hours. The base station plugs into a USB port on the console (or a powered hub off the console) and doesn't need local power, which makes it easy to set up.

The RFR data sheet (Adobe PDF) has some info; more can be found in the Ion/Eos manual: Lighting solutions for Theatre, Film & Television Studios and Architectural spaces : ETC

One thing it doesn't currently do, and that I wish it did, is control the pan/tilt of movers via the two scroll wheels on the left and right sides of the unit. That would allow remote focusing. Maybe someday?

Anything else you need to know?

What SteveB said.

Also some direct links for you:

Net3 RFR DataSheet

Ion Manual (See Pages 324-333 for RFR operation)

jon geller

Member

anyone know what the difference between the LR and BTS apps is?
